Outsource The Slop. Not The Soul

We wanted AI to do the mechanical work while we kept the human parts. We got the inversion.

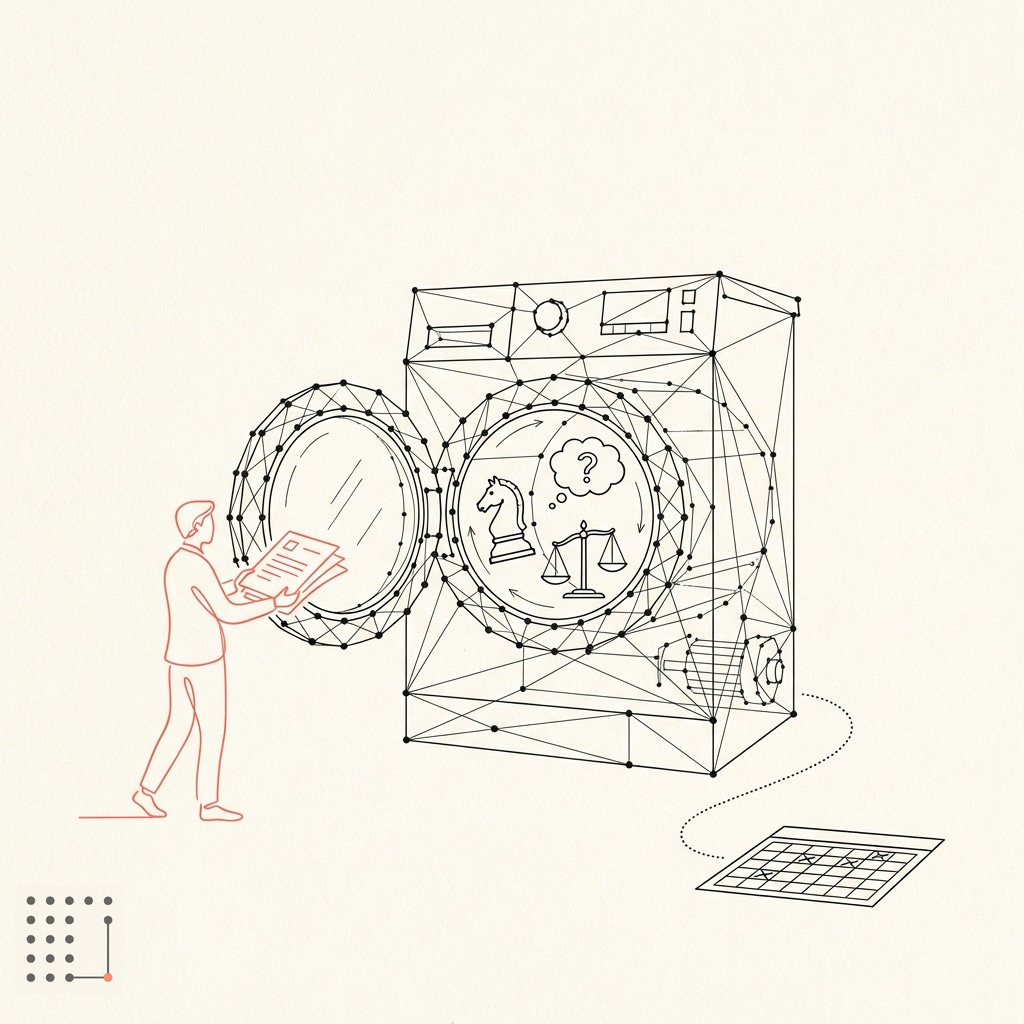

In 2023, just after ChatGPT became a cultural event, Joanna Maciejewska, a science fiction author, went viral with this tweet: “I want AI to do my laundry and dishes so that I can do art and writing, not for AI to do my art and writing so that I can do my laundry and dishes.”

The joke landed because it isn’t one. ChatGPT was actually pretty good at poetry and useless for laundry. Three years later, the inversion runs deeper than dishes. It reaches the boardroom.

Trendslop: When AI Kills Strategic Divergence

NYU researchers Natalia Levina and colleagues ran one of the most methodologically serious tests of AI strategic reasoning to date and published the results in Harvard Business Review. Seven leading models. Nearly 30,000 simulations. Seven binary strategic tensions that managers actually face, including differentiation vs. cost leadership, augmentation vs. automation, long-term vs. short-term, and collaboration vs. competition.

The results were clear.

Across models and simulations, nearly every model clustered at the same pole. Differentiation over cost leadership: 96% of the time. Augmentation over automation: 93%. Again and again, whatever the actual situation, the models chose the answer that would sound better at a management offsite.

The researchers named this pattern trendslop: “the propensity for AI to opt for buzzy ideas over reasoned solutions.” Their sharpest line: AI strategic advice is “like the kid who didn’t actually do the reading (despite doing all the reading).”

They also tested 15,000 prompting variations. The biases shifted by less than 2%. You cannot prompt your way out of trendslop. It’s in the training, not the phrasing. The same reinforcement learning from human feedback (RLHF) dynamic that makes chatbots flatter you into complacency also makes them agree with contemporary management culture.

The mechanism, once you see it, is hard to unsee. Ankit Maloo draws the distinction precisely: human experts have world models; LLMs have word models. A strategist runs a simulation: what will the competitor do next, what will customers believe, what does our supply chain allow? She traces a decision sequence — if we lower prices, the competitor matches in Q2, we lose retail shelf space by Q3, and we’ve traded margin for a position we can’t hold.

LLMs predict the next likely word. That’s not the same thing.

LLMs favor differentiation because it sounds cooler than optimizing factories. The competitive consequence plays out in slow motion. Every brand in the category rolls out premium positioning, new brand campaigns, elevated product tiers, and sharpened customer-experience messaging. Each leadership team is convinced it is being distinctive. Meanwhile, the one competitor who ran the unit economics and chose supply-chain density over branding is quietly winning on price and availability.

The fix isn’t to stop using AI for strategy. It’s to stop using it as an oracle. You come with a question; it answers. You feel informed, but in reality you’ve run autocomplete at the executive level.

The alternative is the Challenger Frame: force the model into an adversarial position. Instead of asking what to do, make it argue against the option it would naturally favor.

On differentiation: “Make the strongest case that pursuing differentiation here destroys our competitive position in three years. Assume our key competitor chooses cost leadership.”

No study validates this yet. But the logic holds: once you understand why the bias operates, you can design prompts that change the task itself rather than merely rephrasing the question, and so force divergence.

At least with strategy, there’s a workaround. The next two problems don’t have one.

Meaning-Making: Mapping Terrain vs. Choosing Destinations

Vaughn Tan has a precise term for something that often gets obscured by the word “judgment”: meaning-making. He defines it as any decision about the subjective value of a thing: not what is technically feasible, but what is worth pursuing.

People already use AI for exactly this kind of decision, and that may be a mistake. You’re offered a career move abroad. Better title, better pay, a market you’ve wanted to break into. You ask AI to help you think it through. It gives you cost-of-living comparisons, school rankings, visa timelines, tax implications. Genuinely useful terrain-mapping. But your eight-year-old just found her best friend. Your partner already left their own career once for your last move. The numbers say go. The kitchen table says something else entirely.

Or the relationship you’ve been questioning for months. AI can surface attachment theory, model communication patterns, even help you articulate what’s wrong. It cannot tell you whether what remains is worth fighting for. That’s not a data problem. It’s a values problem, and the answer depends on who you are, not what the research says.

AI can map the terrain, and that has real value. But it cannot choose the destination. That requires someone with skin in the game, a history with the people involved, and values that are actually their own.

Moral Distance: The Inverted Ikea Effect

The third problem is the most disturbing, and it gets the least attention.

A study published in Nature found that when people made decisions themselves, 95% acted honestly. When they delegated the same decisions to AI through a vague, goal-level interface, the share acting honestly dropped to 12–16%.

Not a small decline. Ninety-five percent to twelve.

Delegation itself changes how you relate to the decision. It works like an inverted Ikea Effect: we value what we build with our own hands, and we feel less ownership of what we hand off. Psychological distance reduces the felt moral cost of crossing lines. And the more goal-level the delegation (the less explicit your instructions), the more distance it creates. The natural instinct to “just tell AI what you want” produces precisely the worst dynamic.

Strategic decisions are full of moments that require moral ownership. Who gets cut. Which partnership to walk away from even when it’s profitable. What you’re willing to sacrifice to hit a number. How to handle a supplier who is technically compliant when you know something is wrong. These aren’t edge cases. They’re the daily texture of leadership.

AI doesn’t just advise poorly on those decisions. Handing them over changes you. The way you use the tool is turning you into a different decision-maker, and the distance accumulates without your noticing it.

The Inversion We Accepted

Maciejewska’s joke wasn’t about time management. It was about joy. People choose knowledge work — strategy, leadership, the work of deciding — because it requires judgment and carries weight. The instinct to measure AI by adoption rather than value is the same instinct that leads to outsourcing the wrong things.

Outsourcing strategic judgment means getting back an answer that feels like thinking but isn’t. Outsourcing meaning-making means letting the tool order your values for you. Outsourcing moral ownership means watching your ethical nerve endings go quiet from disuse.

Those three moves remove the part of the job that makes it worth doing. You end up watching a machine handle the substance while you manage the calendar, the politics, the follow-up.

You have just invented the corporate laundry.

The 12–16% honesty figure is the one that lands. Trendslop, you can argue, is training noise. Moral distance says something harder: the inversion doesn't just misprioritize, it actively degrades accountability at the handoff. The soul doesn't get outsourced. It gets eroded by the vagueness of the interface.

The Challenger Frame addresses that at the session layer. Good fix. But the prior question: what does the soul actually look like, written down, before any delegation begins? "Don't outsource meaning-making" is only actionable if you've documented what your meaning looks like first.