Ditch the Plan. Find the Loop.

AI doesn’t need your plan; it now designs its own experiments. It just needs your definition of “better.”

Andrej Karpathy pointed an AI coding agent at a training optimization problem. Not a step-by-step plan. Not a list of experiments to run. Just the problem and a way to measure improvement. The agent ran 700 experiments in two days, discovered 20 meaningful improvements, and delivered an 11% performance gain, according to Jeremy Kahn at Fortune.

Tobias Lütke, Shopify’s CEO, tried the same approach: he pointed an agent at internal company data with instructions to improve model quality and speed, let it run overnight, and reported that 37 experiments had delivered a 19% gain by morning.

The CEO of a $100 billion company didn’t hand AI a plan. He handed it a problem and let the loop run.

Nobody specified which variables to test or designed an experiment matrix. The agent looked at the code, decided what to change, ran the test, scored the result, and moved on to the next idea it generated itself. Seven hundred times.

We’ve been trained to see AI through the lens of intelligence. But the more consequential capability looks nothing like “our” intelligence. It looks like a cook who tries every seasoning combination in an afternoon. Not because the cook has great taste. Because the cook never stops tasting.

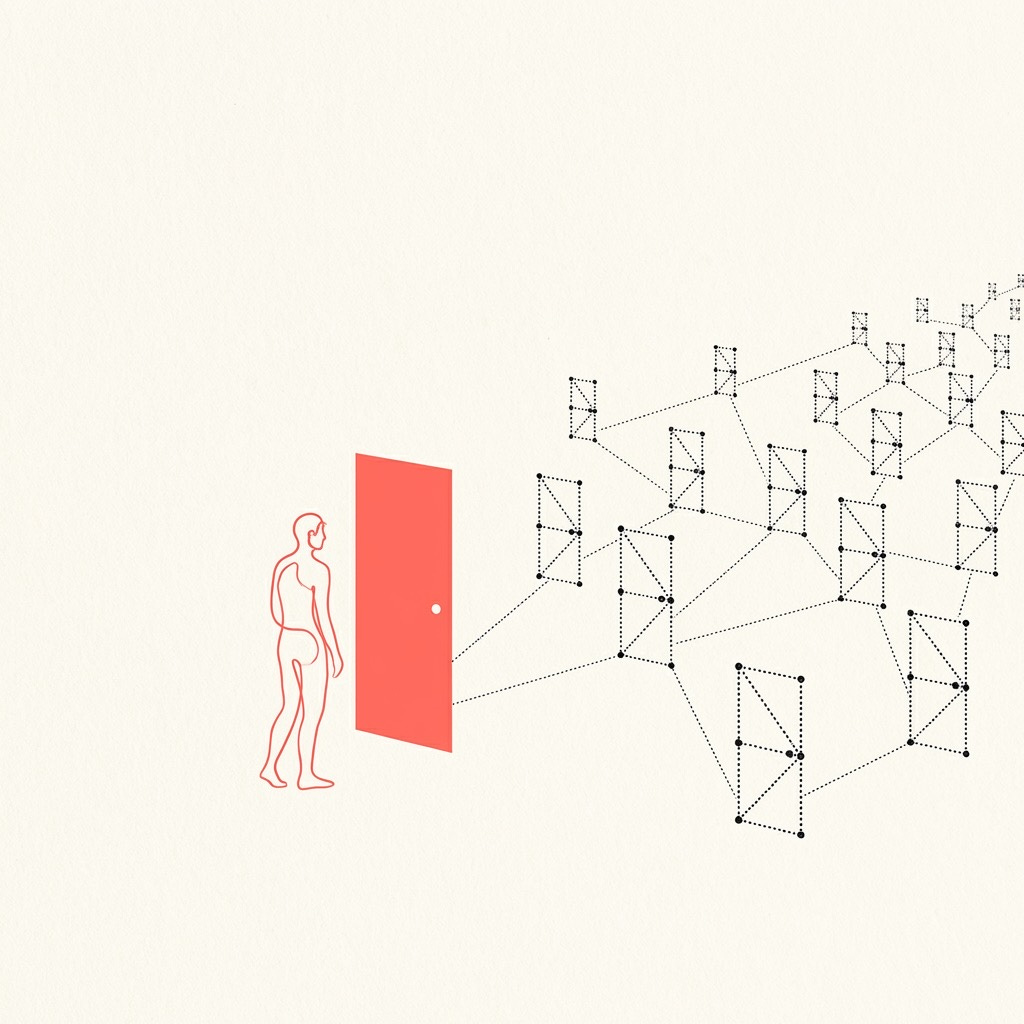

From Plans to Loops

Computers have always been tireless experimenters. Give a machine a thousand things to try, it tries them all without complaint. And once it finds something better, that’s the new floor — no forgetting, no regression, no institutional memory loss. None of this is new.

What’s new is what you need to hand the machine.

Five years ago, you handed it a plan. Here’s the code, execute it. That’s automation.

Two years ago, you could hand it an experiment plan. Test these three headlines against these three images against these three calls to action: 3 × 3 × 3 = 27 combinations. The machine ran the loop: tested, scored, picked the winner. Useful, but the loop was bounded by whoever designed the 27 variants.
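To make the bounded version concrete, here is a minimal sketch of that human-designed experiment plan. The headline, image, and call-to-action labels and the point values in `score` are invented for illustration; in practice the score would come from a measured metric such as click-through rate.

```python
from itertools import product

# Hypothetical variants chosen by a human designer, with toy point values
# standing in for a measured metric.
HEADLINES = {"Save time": 2, "Save money": 3, "Try it free": 5}
IMAGES = {"product": 1, "team": 2, "customer": 4}
CTAS = {"Buy now": 1, "Learn more": 3, "Start trial": 2}


def score(variant):
    """Toy score function (an assumption): sum of per-element points."""
    headline, image, cta = variant
    return HEADLINES[headline] + IMAGES[image] + CTAS[cta]


# The whole experiment space is fixed up front: 3 x 3 x 3 combinations.
variants = list(product(HEADLINES, IMAGES, CTAS))

# The machine's only job is to test every variant and pick the winner.
winner = max(variants, key=score)
```

The machine can never find anything outside `variants`. That list is the boundary the designer drew.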

Now you hand it a problem. The machine designs the experiments itself.

That’s the shift. Not that the loop runs faster. Not that it costs less. The machine now generates its own experiments, scores its own results, and decides what to try next. The entire loop is self-directed.

So the highest-leverage move now is to find a Loopable Problem: any problem where

experiments can be run in code or simulation,

“better” can be measured by a clear metric, called a “score function,” and

failure is cheap enough to try thousands of times.

Again, the critical change isn’t in these three properties. It’s that you no longer need to know what to try. You define the problem and the score function. The machine runs the loop.
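The shape of a self-directed loop can be sketched in a few lines. This is a toy hill-climb under stated assumptions, not how any of the agents mentioned here actually work: `score` is a made-up function with its peak at 3, and `propose` is a random tweak standing in for the agent's own idea generation.

```python
import random


def score(x):
    """Hypothetical score function: higher is better, best possible at x = 3."""
    return -(x - 3.0) ** 2


def propose(current, rng):
    """Stand-in for the agent's idea generator: a small random change."""
    return current + rng.uniform(-0.5, 0.5)


def run_loop(start, trials, seed=0):
    """Propose, score, keep only improvements, repeat."""
    rng = random.Random(seed)
    best, best_score = start, score(start)
    kept = 0
    for _ in range(trials):
        candidate = propose(best, rng)
        candidate_score = score(candidate)
        if candidate_score > best_score:
            # An improvement becomes the new floor; the loop never regresses.
            best, best_score = candidate, candidate_score
            kept += 1
    return best, best_score, kept


best, best_score, kept = run_loop(start=0.0, trials=700)
```

Notice what the human supplied: a starting point and `score`. Nothing in the code lists which values to try.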

The Loop Is Already Running

Google DeepMind’s AlphaEvolve found the first improvement to matrix multiplication algorithms in 56 years. It is also one of the most directly consequential AI findings of recent years, because matrix multiplication sits at the core of the computation that powers AI. In other words, the machine found a way to speed itself up. Just a problem, a score function, and a (good) loop.

Sakana AI’s Darwin Gödel Machine (paper) pushed an AI coding agent from 20% to 50% on a standard software engineering benchmark. The method matters: it discovered structural modifications, architectural changes its own prior version couldn’t have conceived, and those changes transferred across programming languages. Not incremental tuning. The machine evolved past its earlier self.

These aren’t isolated demos. They’re the same loop applied at different levels: improve an algorithm, improve the training, improve the architecture itself. Hand the machine a problem with a measurable score. The loop kicks in and, after thousands of trials, finds what no human had thought to test.

Which Problems Are Loopable?

That becomes the key question. Code is the obvious case: it runs fast, metrics are clean, broken tests don’t hurt anyone. But the Loopable Problem reaches beyond software.

NVIDIA’s NVCell designs chip layouts — physical silicon, a domain where experienced engineers move carefully and incrementally. According to NVIDIA, two GPUs running NVCell accomplish in days what ten engineers do in a year. Give it the problem — optimize this chip layout. Give it the score function — performance benchmarks. The loop generates layout candidates no engineer would have tried, simulates their performance, and iterates.

The same pattern is showing up wherever the three conditions hold: code optimization, algorithm design, chip layout, logistics routing, and, notably, training AI itself. Other domains are converging: materials science, financial model calibration, supply chain configuration. These fields already had simulation and scoring infrastructure, so they could evaluate a candidate once they had one. What they lacked was a way to generate diverse, non-obvious candidates at scale. Generative AI closes that gap. Two years ago, each required a specialized team to design the experiment space. Now the AI generates the candidates directly.

Then there are problems that aren’t loopable, and understanding why is a key part of understanding GenAI value.

Strategy, negotiation, creative vision, ethical judgment.

These fail not because AI lacks capability but because the score function is contested. Consider a strategic reorganization: is “better” measured by revenue, speed, retained talent, or three-year market position? Each is defensible. Each runs the loop in a different direction. When reasonable people can’t agree on what “better” means, no machine-readable finish line exists. And failure is rarely cheap in these domains: a failed negotiation doesn’t revert.
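A small sketch shows why a contested score function stalls the loop. The three reorganization plans and their ratings below are invented for illustration; the point is only that each defensible metric crowns a different winner.

```python
# Hypothetical reorganization plans, each rated 1-10 on three metrics.
# All numbers are made up for the example.
options = {
    "plan_a": {"revenue": 9, "speed": 4, "retained_talent": 5},
    "plan_b": {"revenue": 5, "speed": 9, "retained_talent": 4},
    "plan_c": {"revenue": 4, "speed": 5, "retained_talent": 9},
}


def winner_by(metric):
    """Return the plan that scores highest on a single metric."""
    return max(options, key=lambda name: options[name][metric])


# Same candidates, three different definitions of "better".
winners = {m: winner_by(m) for m in ("revenue", "speed", "retained_talent")}
```

Until someone picks one metric, or agrees on how to weight them, the loop has three finish lines and therefore none.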

No loop can run without a finish line.

The Executive Filter

For any process in your organization, ask three questions.

Can experiments actually be run in code or simulation? Can you define “better” in terms a machine can measure? And if a thousand experiments fail, does anything break?

Three yeses means you’ve found the loop. Two years ago, even with three yeses, you’d have needed a specialized team to design the experiments and build the evaluation system. Today, the AI generates both. Problems that required months of engineering can now be prototyped in days.

The improvement compounds. Each cycle starts from a higher baseline. The gap between an organization running AI-designed experiments and one running human-designed tests widens with every iteration.

If any answer is no — and particularly the one about your definition of “better” — you’ve found something more interesting than a loopable problem. You’ve found the investment that would create one.
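The filter is simple enough to write down as a checklist. This is a minimal sketch; the `Process` fields and the two example processes are hypothetical, and the yes/no answers are judgments you would supply yourself.

```python
from dataclasses import dataclass


@dataclass
class Process:
    """One business process plus your answers to the three questions."""
    name: str
    runs_in_code_or_simulation: bool
    has_measurable_score: bool
    failure_is_cheap: bool


def missing_conditions(process):
    """List the conditions that are not yet met; an empty list means loopable."""
    checks = {
        "experiments in code or simulation": process.runs_in_code_or_simulation,
        "machine-measurable definition of better": process.has_measurable_score,
        "cheap failure": process.failure_is_cheap,
    }
    return [label for label, ok in checks.items() if not ok]


def is_loopable(process):
    """Three yeses means the loop can run today."""
    return not missing_conditions(process)


# Hypothetical examples.
routing = Process("delivery routing", True, True, True)
ad_creative = Process("ad creative", True, False, True)
gaps = missing_conditions(ad_creative)
```

For a process that fails the filter, the returned list doubles as the to-do list: each missing condition names the investment that would make the problem loopable.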

Before generative AI, nobody spent much energy building scoring functions for business processes. Why would you? There was no loop to feed. Defining “better” in machine-readable terms for your ad creative or meeting summary was an academic exercise.

That calculus has flipped. The AI can now generate candidates, run experiments, and iterate autonomously. The only missing piece is the score function — the definition of “better” that the loop needs to run. Which means the highest-leverage investment you can make right now might not be building AI systems at all.

It might be building the scoring function that lets AI loose on a problem you’ve been solving by hand.

The competitor who defines “better” first doesn’t just get ahead. They get a loop that compounds while you’re still deciding what the goal is.

excellent read

The strongest part of this is the boundary you draw. Non-loopable problems aren't waiting for better models. They resist looping because the score function is contested — nobody agrees on what "better" means.

I've been building in that space for months. When "better" depends on judgment that shifts as you evaluate it, you can't define a score function and let the machine iterate. What you can build are constraint files — governance artifacts written from real decisions that force you to articulate what you mean before the system runs. Decision logs. Quality gates. Voice rules extracted from practice.

Some of those eventually harden into score functions. The ones that don't are the actual asset.

The boundary between loopable and non-loopable is where the interesting work lives right now. You mapped one side of it here clearly.